What Voice Chatbots Are and How They Work in 2026

A voice chatbot is an AI-powered software system that conducts conversations using spoken language instead of (or in addition to) text. Unlike simple IVR phone trees that force callers to press buttons, modern voice chatbots understand natural speech, interpret intent, and respond with synthesized human-sounding voices — all in real time.

The Technology Stack Behind Voice Chatbots

Voice chatbots chain several AI technologies together, and a well-optimized system aims to complete each conversational turn in under roughly 500 milliseconds:

- Automatic Speech Recognition (ASR): Converts spoken words into text. Modern ASR engines from Google, AWS, and OpenAI achieve 95%+ accuracy in most languages and handle accents, background noise, and conversational speech far better than systems from even two years ago.

- Natural Language Understanding (NLU): Interprets the text to identify the speaker's intent, extract entities (dates, names, product IDs), and understand context. This is the same AI/NLP engine used in text chatbots, but tuned for the patterns of spoken language.

- Dialog Management: Determines the appropriate response based on intent, conversation history, and business rules. Handles multi-turn conversations where context carries across multiple exchanges.

- Text-to-Speech (TTS): Converts the response text into natural-sounding speech. In 2026, leading TTS engines produce voices virtually indistinguishable from human speech, with appropriate pauses, emphasis, and intonation.

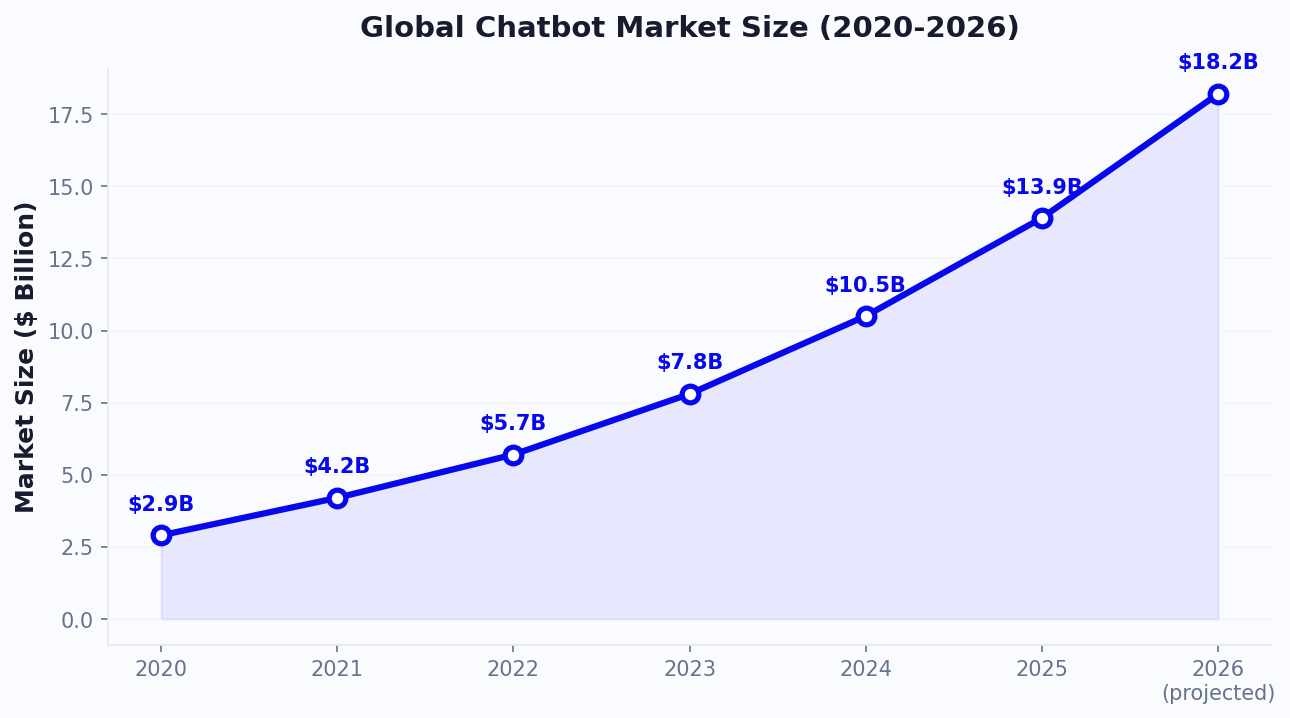

The Current State of the Market

The voice chatbot market has matured significantly. There are now 157 million voice assistant users in the United States alone, and consumers are increasingly comfortable interacting with AI by voice. Voice commerce (purchasing products through voice interfaces) is projected to exceed $100 billion globally by the end of 2026.

For businesses, this shift means voice is no longer experimental. It is a production-ready channel for customer service, sales, and operations. The question is no longer "does voice AI work?" but rather "is voice the right channel for my specific use case?"

Key players in the voice chatbot space include Google Dialogflow CX, Amazon Lex, Microsoft Azure Bot Service, and specialized platforms like Voiceflow, Replicant, and PolyAI. Platform choice depends heavily on use case, integration requirements, and budget — which we will explore in the cost section below.

Voice vs Text Chatbots: A Detailed Comparison

Voice and text chatbots are not interchangeable. Each excels in different contexts, and choosing the wrong modality wastes budget and frustrates users. Here is a detailed comparison across every dimension that matters.

User Experience Comparison

| Dimension | Voice Chatbot | Text Chatbot |
|---|---|---|
| Speed of input | 150 words/minute (speaking) | 40 words/minute (typing) |
| Speed of output | Real-time audio (linear consumption) | Instant text (scannable) |
| Hands-free capability | Full hands-free operation | Requires screen and typing |
| Information density | Low (must listen sequentially) | High (scan, scroll, compare) |
| Privacy | Low (others can overhear) | High (silent interaction) |
| Multitasking | Excellent (talk while doing other things) | Moderate (requires visual attention) |
| Accessibility | Better for vision-impaired, elderly, low-literacy | Better for hearing-impaired, noisy environments |
| Rich media support | None (audio only) | Images, videos, carousels, buttons |
| Error recovery | Harder (must re-explain verbally) | Easier (edit text, use buttons) |

Technical Comparison

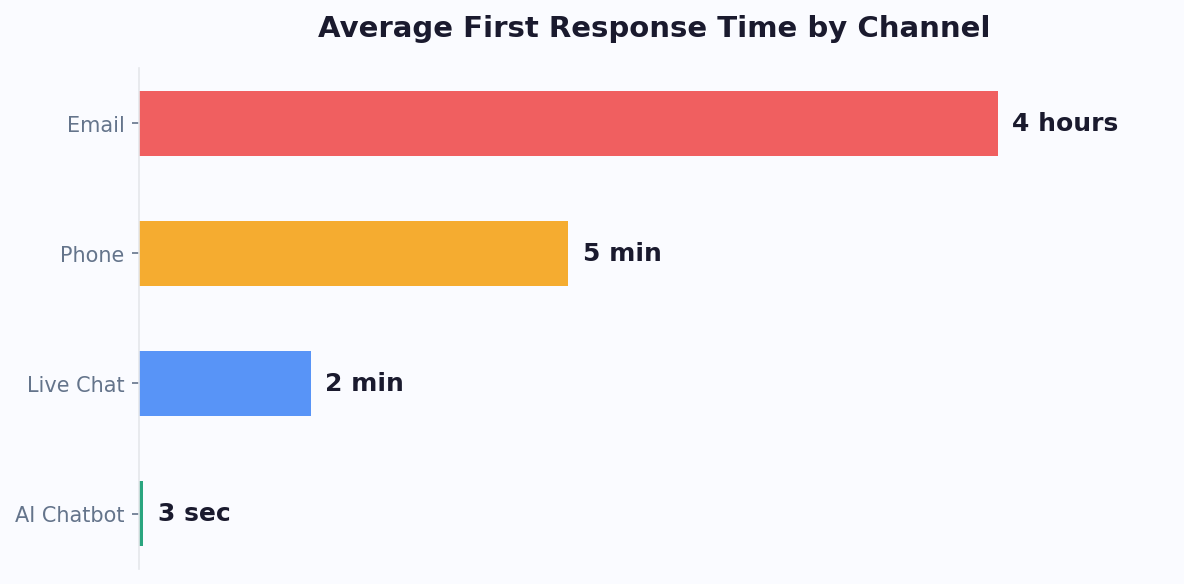

Accuracy: Text chatbots have an inherent accuracy advantage because they skip the ASR step. There is no speech recognition error to compound the NLU interpretation. Voice chatbots must handle accents, background noise, homophones, and speech disfluencies — adding a 3-8% error rate before intent classification even begins.
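The following minimal Python sketch shows why a few points of ASR error matter: errors compound across the pipeline. It makes two pessimistic assumptions. First, every ASR error also breaks intent classification. Second, the two stages fail independently, so their success rates multiply. The 92% NLU accuracy is a hypothetical figure, not a benchmark.

```python
def end_to_end_accuracy(asr_error_rate: float, nlu_accuracy: float) -> float:
    """Estimate how often a voice turn is understood correctly.

    Simplifying assumptions: any ASR error causes an intent error, and ASR
    and NLU errors are independent, so the two success rates multiply.
    """
    asr_accuracy = 1.0 - asr_error_rate
    return asr_accuracy * nlu_accuracy

# Same NLU model, with increasingly noisy speech recognition in front of it.
nlu_accuracy = 0.92  # hypothetical intent accuracy on clean typed text
for asr_error_rate in (0.0, 0.03, 0.08):
    combined = end_to_end_accuracy(asr_error_rate, nlu_accuracy)
    print(f"ASR error {asr_error_rate:.0%}: end-to-end accuracy {combined:.1%}")
```

Under these assumptions, a 3-8% ASR error rate pulls end-to-end accuracy from 92% down to roughly 85-89%. Many real ASR errors (a misheard filler word, for instance) do not change the intent, so this is closer to a worst case than a typical result.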

Latency: Voice chatbots require ASR + NLU + TTS processing, adding 300-800ms of latency compared to text chatbots that only need NLU processing. While sub-second latency is achievable, it requires careful optimization and higher-cost infrastructure.
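Because the stages run in sequence, their latencies add up. Here is a minimal Python sketch of a per-turn latency budget. The stage names and millisecond values are hypothetical placeholders chosen to land inside the 300-800ms range above; they are not vendor measurements.

```python
# Hypothetical per-stage latencies (ms) for one conversational turn.
# Real values depend on vendors, model sizes, network path, and hosting region.
VOICE_PIPELINE_MS = {"asr_final_transcript": 250, "nlu_and_dialog": 150, "tts_first_audio": 200}
TEXT_PIPELINE_MS = {"nlu_and_dialog": 150}

def total_latency(stages: dict[str, int]) -> int:
    """Sequential stages: the turn takes the sum of every stage's latency."""
    return sum(stages.values())

def within_budget(stages: dict[str, int], budget_ms: int) -> bool:
    return total_latency(stages) <= budget_ms

voice = total_latency(VOICE_PIPELINE_MS)
text = total_latency(TEXT_PIPELINE_MS)
print(f"voice: {voice} ms, text: {text} ms, voice overhead: {voice - text} ms")
print("fits a 1-second turn budget:", within_budget(VOICE_PIPELINE_MS, 1000))
```

Streaming ASR and TTS allow stages to overlap: the bot can start speaking before the whole reply is synthesized. This overlap is one of the main ways production systems beat the purely sequential total.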

Development complexity: Voice chatbots require 2-3x more development effort than equivalent text chatbots. You need to design for speech-specific challenges: handling interruptions, managing silence, dealing with "um" and "uh" filler words, and creating prompts that sound natural when spoken aloud.
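Filler words are one speech-specific problem that text bots never encounter. One simple mitigation, sketched below in Python, drops standalone fillers from the transcript before intent classification. The `FILLERS` set is a hypothetical starting list. Ambiguous words such as "like" or "so" are deliberately kept because they often carry meaning.

```python
import re

# Hypothetical starting list; tune it against your own call transcripts.
FILLERS = {"um", "uh", "erm", "hmm", "uhm"}

def strip_fillers(transcript: str) -> str:
    """Remove standalone filler tokens before the text reaches the NLU step.

    Also lowercases the text and drops punctuation as a side effect.
    """
    tokens = re.findall(r"[\w']+", transcript.lower())
    return " ".join(token for token in tokens if token not in FILLERS)

print(strip_fillers("Um, uh, I need to, uh, check on my order"))
# i need to check on my order
```

Lowercasing and dropping punctuation suit a simple intent matcher but may not suit every NLU engine. Check what your platform expects before adding a step like this.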

Cost Comparison

Voice chatbots cost 3-5x more than text chatbots for equivalent functionality. The premium comes from ASR/TTS processing costs, telephony infrastructure, higher development complexity, and the need for more extensive testing across accents and acoustic conditions.

Text chatbots deployed on WhatsApp, Instagram, and Messenger leverage existing messaging infrastructure with minimal additional cost. Voice chatbots require dedicated telephony or voice platform integration, which adds ongoing per-minute charges.

Top Use Cases: Where Voice Chatbots Deliver the Most Value

Voice chatbots are not universally better or worse than text — they are specifically better for certain use cases. Here are the scenarios where voice delivers clear advantages.

1. IVR Replacement

Traditional IVR (Interactive Voice Response) systems are universally hated. "Press 1 for billing. Press 2 for support. Press 3 for..." — customers endure 4-7 menu levels before reaching the right department, with 67% of callers attempting to bypass the IVR entirely by pressing 0 repeatedly.

Voice chatbots replace this with natural conversation: "Hi, how can I help you today?" The caller says "I need to check on my order" and the voice bot routes them instantly — no menus, no button pressing. Companies that replace IVR with voice chatbots report 35-50% reduction in call handling time and 25-40% improvement in customer satisfaction scores.
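The routing step can be illustrated with a deliberately simple Python keyword matcher. This is a sketch under stated assumptions. The intent names and keyword lists are hypothetical, and a real deployment would use the platform's trained NLU model rather than substring matching. The key design choice is the default: when nothing matches, the call goes to a human instead of being guessed into the wrong queue.

```python
# Hypothetical intents and trigger phrases; production bots use a trained classifier.
ROUTES = {
    "order_status": ("order", "delivery", "package", "shipping"),
    "billing": ("bill", "charge", "invoice", "refund", "payment"),
    "tech_support": ("not working", "error", "broken", "reset"),
}

def route(utterance: str, default: str = "human_agent") -> str:
    """Return the first intent whose keywords appear in the caller's utterance."""
    text = utterance.lower()
    for intent, keywords in ROUTES.items():
        if any(keyword in text for keyword in keywords):
            return intent
    return default  # nothing matched: hand off rather than guess

print(route("I need to check on my order"))            # order_status
print(route("Why is there an extra charge?"))          # billing
print(route("I'd like to talk about something else"))  # human_agent
```

Substring matching is fragile ("bill" also matches "billion"), which is exactly why production voice bots rely on trained intent classifiers.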

This is the single highest-ROI use case for voice chatbots because the baseline experience (traditional IVR) is so poor that any improvement is dramatic.

2. Drive-Through and In-Store Ordering

Quick-service restaurants have adopted voice chatbots for drive-through ordering at scale. Chains like Wendy's, Taco Bell, and Checkers have deployed or piloted voice AI that takes orders, upsells, and processes payments — all by voice. The results: order accuracy above 95%, consistent upselling that increases average order value by 10-15%, and elimination of staffing challenges for the drive-through window.

This extends beyond restaurants to any business where customers interact hands-free: pharmacies, warehouses, automotive service centers, and retail self-checkout kiosks.

3. Accessibility and Inclusivity

For users with visual impairments, motor disabilities, or low literacy, voice chatbots are not a convenience — they are a necessity. Over 2.2 billion people globally have vision impairment, and screen readers for text chatbots provide a clunky experience. Voice chatbots offer natural, barrier-free interaction.

Similarly, elderly users who struggle with small screens and typing find voice interfaces significantly more accessible. Businesses in healthcare, government services, and financial services have regulatory and ethical obligations to provide accessible customer service — voice chatbots fulfill this requirement more naturally than any text alternative.

4. High-Volume Phone Support Deflection

Businesses receiving thousands of phone calls daily can deploy voice chatbots as the first point of contact. The voice bot handles routine queries (account balance, appointment confirmation, order status) and only transfers to a human agent for complex issues. This typically deflects 40-60% of call volume, saving $3-8 per deflected call.

For a call center handling 5,000 calls/day, deflecting 50% (2,500 calls) at $5 savings per call equals $12,500/day, or roughly $4.6 million annually over 365 days.
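You can parameterize the deflection arithmetic to plug in your own call volume, deflection rate, and per-call savings. Here is a minimal Python sketch. It computes gross savings only and assumes a configurable number of operating days (365 by default). Subtract platform, processing, and maintenance costs before treating the result as ROI.

```python
def deflection_savings(calls_per_day: int, deflection_rate: float,
                       savings_per_call: float, days: int = 365) -> float:
    """Gross savings from calls the voice bot resolves without a human agent."""
    deflected_per_day = calls_per_day * deflection_rate
    return deflected_per_day * savings_per_call * days

daily = deflection_savings(5_000, 0.50, 5.00, days=1)
yearly = deflection_savings(5_000, 0.50, 5.00)
print(f"${daily:,.0f}/day, ${yearly:,.0f}/year")
```

With 5,000 calls/day, 50% deflection, and $5 saved per call, this reports $12,500/day and $4,562,500/year. A center open about 250 business days a year would see roughly $3.1 million instead.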

5. Outbound Calling Campaigns

Voice chatbots handle outbound calls for appointment reminders, payment collections, survey administration, and lead qualification. They can place 10,000+ calls simultaneously, something no human team can match. An analytics dashboard can track connection rates, completion rates, and outcomes across campaigns in real time.
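Placing thousands of calls at once is largely a concurrency-control problem. The bot fans out calls but caps how many are active at the same time, typically to stay within the channel limits of a SIP trunk or CPaaS account. Below is a minimal asyncio sketch in Python. `place_call` is a hypothetical stand-in that simulates a call with a random outcome; it is not a real telephony SDK function.

```python
import asyncio
import random

async def place_call(number: str) -> str:
    """Hypothetical stand-in for a telephony API; swap in your CPaaS SDK here."""
    await asyncio.sleep(random.uniform(0.01, 0.05))  # simulate ringing and talk time
    return random.choice(["completed", "no_answer", "voicemail"])

async def run_campaign(numbers: list[str], max_concurrent: int) -> dict[str, int]:
    """Dial every number, never exceeding max_concurrent active calls."""
    semaphore = asyncio.Semaphore(max_concurrent)
    outcomes: dict[str, int] = {}

    async def call_one(number: str) -> None:
        async with semaphore:  # waits here while the concurrency cap is reached
            outcome = await place_call(number)
        outcomes[outcome] = outcomes.get(outcome, 0) + 1

    await asyncio.gather(*(call_one(number) for number in numbers))
    return outcomes

if __name__ == "__main__":
    fake_numbers = [f"+1555000{i:04d}" for i in range(1_000)]  # fictional numbers
    print(asyncio.run(run_campaign(fake_numbers, max_concurrent=200)))
```

In a real campaign, the concurrency cap, retry policy, and calling windows would also need to reflect TCPA and local calling rules.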

Voice Chatbot Cost Breakdown: What to Budget in 2026

Voice chatbot pricing is more complex than text chatbot pricing because of the additional technology layers involved. Here is a transparent breakdown of what you will actually pay.

Platform and Development Costs

| Cost Category | Low End | Mid Range | Enterprise |
|---|---|---|---|
| Platform subscription | $200/mo | $500-800/mo | $1,500-2,000/mo |
| Initial development/setup | $2,000-5,000 | $10,000-25,000 | $50,000-150,000 |
| ASR/TTS processing | $0.004-0.006/15 sec | $0.006-0.01/15 sec | Custom pricing |
| Telephony (if phone-based) | $0.01-0.03/min | $0.03-0.05/min | $0.02-0.04/min (volume) |
| Monthly maintenance | $500-1,000 | $1,000-3,000 | $3,000-10,000 |

Understanding Per-Minute Costs

Voice chatbots have a significant variable cost component that text chatbots do not: per-minute charges for speech processing and telephony. A typical voice chatbot conversation lasts 2-4 minutes. At $0.08-0.15 per minute (combined ASR + TTS + telephony), each conversation costs $0.16-0.60 in processing alone.

Compare this to text chatbot conversations that cost $0.001-0.01 per interaction. At scale, this difference matters enormously:

- 10,000 conversations/month via voice: $1,600-6,000 in processing costs

- 10,000 conversations/month via text: $10-100 in processing costs
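The per-conversation and monthly figures above come from two multiplications. The Python sketch below lets you substitute your own call length and vendor rates. The rates used are the article's ranges, not quotes from any specific provider.

```python
def conversation_cost(minutes: float, rate_per_minute: float) -> float:
    """Combined ASR + TTS + telephony cost of a single voice conversation."""
    return minutes * rate_per_minute

def monthly_cost(conversations: int, cost_per_conversation: float) -> float:
    return conversations * cost_per_conversation

low = conversation_cost(2, 0.08)   # short call at the cheap end of the rate range
high = conversation_cost(4, 0.15)  # long call at the expensive end
print(f"per voice conversation: ${low:.2f}-${high:.2f}")
print(f"10,000 voice conversations: ${monthly_cost(10_000, low):,.0f}-${monthly_cost(10_000, high):,.0f}")
print(f"10,000 text conversations: ${monthly_cost(10_000, 0.001):,.0f}-${monthly_cost(10_000, 0.01):,.0f}")
```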

This is why the decision between voice and text should be driven by use case value, not technology preference. Voice makes sense when the use case justifies the higher per-interaction cost.

Total Cost of Ownership by Scale

Small business (500-2,000 voice interactions/month):

- Platform: $200-400/mo

- Processing: $100-300/mo

- Total: $300-700/month

Mid-market (5,000-20,000 interactions/month):

- Platform: $500-1,000/mo

- Processing: $500-2,000/mo

- Total: $1,000-3,000/month

Enterprise (50,000+ interactions/month):

- Platform: $1,500-2,000/mo

- Processing: $3,000-8,000/mo

- Total: $4,500-10,000/month

Hidden Costs to Watch For

Several costs often surprise businesses after deployment:

- Voice tuning and optimization: Voice chatbots require 2-3x more ongoing tuning than text chatbots to handle new accents, phrasings, and edge cases

- Custom voice creation: If you want a branded voice instead of a generic TTS voice, expect $5,000-20,000 for a custom neural voice

- Compliance recording and storage: Industries like finance and healthcare require call recording, adding storage costs

- Fallback staffing: You still need human agents for escalations — voice chatbots reduce but do not eliminate staffing needs

Technical Requirements for Deploying a Voice Chatbot

Deploying a voice chatbot involves more technical complexity than a text chatbot. Here is what your team needs to plan for.

Infrastructure Requirements

For phone-based voice chatbots:

- SIP trunking provider or CPaaS platform (Twilio, Vonage, Bandwidth) for telephone connectivity

- Phone numbers (local, toll-free, or international) — $1-5/month per number plus per-minute charges

- Low-latency cloud hosting — voice chatbots are latency-sensitive; every 100ms of added delay degrades the user experience. Host processing in the same region as your primary customer base

- Redundancy and failover — phone systems have higher availability expectations (99.99%) than web chatbots because callers cannot simply refresh the page
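Availability targets are easier to compare when expressed as allowed downtime. This small Python sketch converts a yearly availability target into minutes of permitted downtime. The 99.9% value is included only as a comparison point.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years

def allowed_downtime_minutes(availability: float) -> float:
    """Maximum total downtime per year permitted by an availability target."""
    return (1.0 - availability) * MINUTES_PER_YEAR

for target in (0.999, 0.9999):
    print(f"{target:.2%} availability -> {allowed_downtime_minutes(target):.1f} min/year")
```

At 99.99%, the phone line can be down for only about 52.6 minutes per year in total, versus roughly 8.8 hours at 99.9%.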

For web-based voice chatbots (in-browser):

- WebRTC or WebSocket implementation for real-time audio streaming

- Client-side microphone access and permission handling

- Echo cancellation and noise suppression (especially important for mobile browsers)

- Fallback to text for browsers that do not support audio or users who deny microphone access
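Real-time streaming usually means slicing microphone audio into small fixed-size frames and sending each one as soon as it is captured, for example as a binary WebSocket message. Below is a minimal Python sketch of the framing step. It assumes 16 kHz, 16-bit mono PCM and 20 ms frames. These are common choices, but check the input format your ASR provider actually expects.

```python
SAMPLE_RATE_HZ = 16_000  # assumed: 16 kHz mono input
BYTES_PER_SAMPLE = 2     # 16-bit linear PCM
FRAME_MS = 20            # assumed duration of audio per streamed message

def frame_size_bytes(sample_rate: int = SAMPLE_RATE_HZ,
                     bytes_per_sample: int = BYTES_PER_SAMPLE,
                     frame_ms: int = FRAME_MS) -> int:
    return sample_rate * bytes_per_sample * frame_ms // 1000

def iter_frames(pcm: bytes, frame_bytes: int):
    """Yield fixed-size frames; a final partial frame is padded with silence."""
    for start in range(0, len(pcm), frame_bytes):
        frame = pcm[start:start + frame_bytes]
        if len(frame) < frame_bytes:
            frame += b"\x00" * (frame_bytes - len(frame))  # zero samples = silence
        yield frame

one_second = bytes(SAMPLE_RATE_HZ * BYTES_PER_SAMPLE)  # one second of silence
frames = list(iter_frames(one_second, frame_size_bytes()))
print(frame_size_bytes(), "bytes per frame,", len(frames), "frames per second")
```

Under these assumptions, each frame is 640 bytes and one second of audio becomes 50 frames. Smaller frames reduce latency at the cost of sending more messages.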

Integration Requirements

Voice chatbots need the same backend integrations as text chatbots — CRM, order management, knowledge base, third-party tools — plus voice-specific integrations:

- Call recording and transcription storage: For quality assurance, compliance, and training data

- Real-time agent handoff: When the voice bot escalates, the call must transfer seamlessly with full context. The human agent should see the conversation transcript and extracted data on their screen as the call transfers

- DTMF handling: Some callers will press buttons out of habit. The voice chatbot should gracefully handle touchtone input alongside voice input

- Outbound dialing: If using the voice bot for outbound calls, integrate with your dialer and comply with TCPA and local calling regulations
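Accepting touchtone and speech side by side mostly means mapping both onto the same set of choices. The Python sketch below assumes a hypothetical menu, digits, and keywords. It also assumes the DTMF digit arrives already decoded by your telephony provider.

```python
import re
from typing import Optional

# Hypothetical menu: each option is reachable by keypad digit or by voice.
MENU = {
    "1": ("billing", ("bill", "billing", "payment")),
    "2": ("support", ("support", "help", "problem")),
    "0": ("agent", ("agent", "representative", "human", "operator")),
}

def resolve_choice(dtmf_digit: Optional[str] = None,
                   transcript: Optional[str] = None) -> Optional[str]:
    """Map a touchtone digit or a spoken phrase onto the same menu option."""
    if dtmf_digit is not None and dtmf_digit in MENU:
        return MENU[dtmf_digit][0]  # a valid keypress takes priority
    if transcript:
        words = set(re.findall(r"[a-z']+", transcript.lower()))
        for option, keywords in MENU.values():
            if words.intersection(keywords):
                return option
    return None  # no match: reprompt rather than guess

print(resolve_choice(dtmf_digit="0"))                               # agent
print(resolve_choice(transcript="I have a billing question"))       # billing
print(resolve_choice(transcript="Can I talk to a human, please?"))  # agent
print(resolve_choice(dtmf_digit="7"))                               # None
```

Returning `None` instead of a best guess lets the dialog reprompt the caller. That is usually cheaper than sending them to the wrong place.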

Data and Training Requirements

Voice chatbots need more training data than text chatbots because spoken language differs from written language:

- Speech patterns: People say "uh, yeah, so I got this thing in the mail about my bill" — not "I have a question about my invoice." Train your NLU on actual spoken transcripts, not written FAQ pairs.

- Accent coverage: If you serve a diverse customer base, test and optimize for major accent groups. ASR accuracy can vary 5-15% across accents without proper tuning.

- Noise robustness: Test with background noise — car traffic, office chatter, TV in the background. Real callers are rarely in quiet rooms.

- Interruption handling (barge-in): Callers will interrupt the bot mid-sentence. The system needs to detect interruptions, stop speaking, and listen — just like a human would.
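The standard metric for measuring how ASR accuracy varies across accents is word error rate (WER). WER is the word-level edit distance between a human reference transcript and the ASR output, divided by the number of reference words. This minimal Python sketch pools WER per accent group. The group labels and transcripts are hypothetical test data.

```python
def word_edit_distance(ref: list[str], hyp: list[str]) -> int:
    """Minimum substitutions + deletions + insertions to turn ref into hyp."""
    # dp[i][j] = edits for the first i reference words vs. the first j hypothesis words
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dp[0][j] = j  # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(dp[i - 1][j] + 1,         # deletion
                           dp[i][j - 1] + 1,         # insertion
                           dp[i - 1][j - 1] + cost)  # match or substitution
    return dp[-1][-1]

def wer_by_group(samples: list[tuple[str, str, str]]) -> dict[str, float]:
    """samples: (accent_group, human_reference, asr_output) triples."""
    errors: dict[str, int] = {}
    words: dict[str, int] = {}
    for group, reference, hypothesis in samples:
        ref, hyp = reference.lower().split(), hypothesis.lower().split()
        errors[group] = errors.get(group, 0) + word_edit_distance(ref, hyp)
        words[group] = words.get(group, 0) + len(ref)
    return {group: errors[group] / max(words[group], 1) for group in errors}

samples = [  # hypothetical labeled test calls
    ("group_a", "i want to check my order", "i want to check my order"),
    ("group_b", "i want to check my order", "i want to chick my border"),
]
print(wer_by_group(samples))
```

Pooling errors and word counts per group, rather than averaging per-call WER, keeps short utterances from dominating the score.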

Plan for 4-8 weeks of development and testing for a basic voice chatbot, or 3-6 months for an enterprise deployment with complex integrations and multi-language support.

Decision Framework: When to Choose Voice vs Text Chatbots

Use this framework to determine whether your business needs a voice chatbot, a text chatbot, or both. The decision depends on five factors.

Factor 1: Customer Context

Choose voice when:

- Customers are driving, cooking, or have their hands occupied

- Your primary audience is elderly or has accessibility needs

- Customers are already calling you by phone (existing phone channel)

- The interaction happens in a physical space (drive-through, kiosk, retail store)

Choose text when:

- Customers are browsing your website or app

- Customers are in public or noisy environments

- The interaction involves visual information (product images, order details, maps)

- Customers are already messaging you on WhatsApp, Instagram, or Messenger

Factor 2: Information Complexity

Choose voice when:

- Queries are simple and conversational ("What are your hours?" "Where is my order?")

- Responses are short (1-2 sentences)

- No visual comparison is needed

Choose text when:

- Responses involve lists, tables, or comparisons

- Users need to share images, documents, or screenshots

- The conversation requires rich media like carousels, buttons, or product cards

- Users need to review and reference previous messages

Factor 3: Volume and ROI

Voice chatbots make financial sense at specific volume thresholds:

- Under 500 voice interactions/month: Likely not cost-justified. Use text chatbots with a simple phone forwarding setup.

- 500-5,000 interactions/month: ROI positive if each voice interaction has a value of $5+ (lead qualification, order taking, appointment booking).

- 5,000+ interactions/month: Strong ROI territory. The per-interaction cost decreases with volume, and the staffing savings from call deflection become significant.
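The Factor 3 thresholds can be written as a rough first-pass screen, as in the Python sketch below. Two parts are assumptions layered on top of the article's ranges: the boundary handling (exactly 5,000 counts as the top tier) and the advice for low-value, mid-volume cases. Treat the output as a starting point for analysis, not a verdict.

```python
def voice_recommendation(monthly_interactions: int, value_per_interaction: float) -> str:
    """Rough screen based on monthly voice volume and value per interaction."""
    if monthly_interactions < 500:
        return "text chatbot + phone forwarding"  # volume too low to justify voice
    if monthly_interactions < 5_000:
        if value_per_interaction >= 5.0:
            return "voice (ROI positive)"
        return "text first, revisit voice later"  # assumption for low-value cases
    return "voice (strong ROI)"  # volume and deflection savings dominate

for volume, value in [(300, 20.0), (2_000, 8.0), (2_000, 1.0), (20_000, 1.0)]:
    print(volume, value, "->", voice_recommendation(volume, value))
```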

Factor 4: Existing Channel Mix

If 70%+ of your customer interactions already happen via phone, a voice chatbot is a natural extension. If most interactions are web or messaging, start with text chatbots and add voice later if phone volume warrants it.

Factor 5: Competitive Landscape

In industries where phone support is the norm (healthcare, financial services, telecom, government), voice chatbot adoption is accelerating. If competitors are deploying voice AI, you risk falling behind on customer experience. In digital-first industries (SaaS, e-commerce, media), text chatbots remain the priority.

The Hybrid Approach

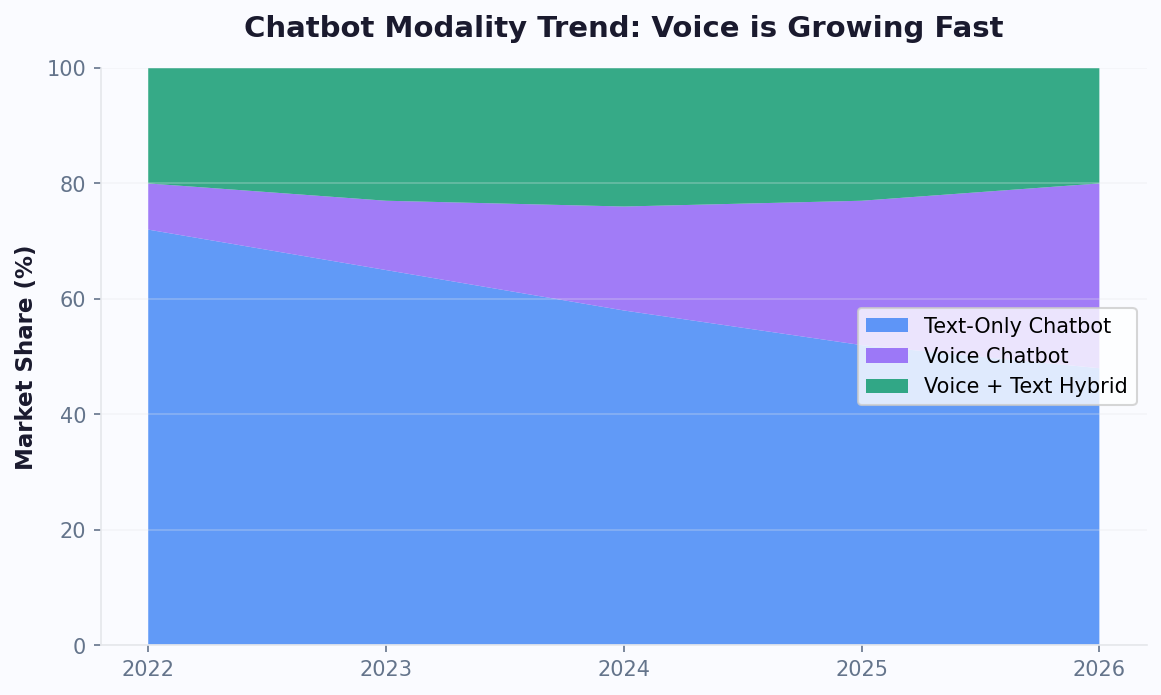

Most businesses in 2026 benefit from a hybrid strategy: text chatbots as the primary channel (lower cost, higher information density) with voice chatbots for specific high-value use cases. Start with text, measure performance with chatbot analytics, identify where voice would add value, and expand incrementally.

The Future of Voice AI: What Is Coming in 2026-2028

Voice chatbot technology is evolving quickly. Here is what the next 24 months are likely to hold and how to prepare.

Emotion-Aware Voice AI

Current voice chatbots understand what you say. Next-generation systems will understand how you say it. Emotion detection from voice — analyzing pitch, pace, volume, and speech patterns — is reaching commercial viability. By late 2026, expect voice chatbots that:

- Detect frustration and automatically escalate to a human agent before the caller asks

- Adjust tone and speaking pace to match the caller's emotional state

- Identify high-urgency calls (distress, anger, confusion) and prioritize routing

Early deployments in healthcare and crisis hotlines are already showing 85%+ accuracy in detecting caller emotional states. Commercial customer service applications are 12-18 months behind.
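The frustration-based escalation described above can be expressed as a simple policy applied on top of an emotion score. The Python sketch below makes explicit assumptions. The per-turn frustration scores (0.0-1.0) come from a hypothetical emotion-detection model, and the threshold and three-turn window are illustrative values. Averaging over a short window avoids escalating on a single noisy reading.

```python
from collections import deque

class EscalationMonitor:
    """Escalate once the average frustration over recent turns crosses a threshold.

    Scores are assumed to come from a separate (hypothetical) emotion model;
    this class only applies the escalation policy.
    """

    def __init__(self, threshold: float = 0.7, window: int = 3):
        self.threshold = threshold
        self.scores = deque(maxlen=window)  # keeps only the most recent turns

    def observe(self, frustration_score: float) -> bool:
        """Record one caller turn; return True if the call should escalate now."""
        self.scores.append(frustration_score)
        window_full = len(self.scores) == self.scores.maxlen
        average = sum(self.scores) / len(self.scores)
        return window_full and average >= self.threshold

monitor = EscalationMonitor()
for turn, score in enumerate([0.2, 0.6, 0.8, 0.9], start=1):
    if monitor.observe(score):
        print(f"turn {turn}: escalate to a human agent")
        break
```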

Hyper-Realistic Synthetic Voices

Text-to-speech quality has crossed the uncanny valley. In blind tests, listeners can no longer reliably distinguish between premium synthetic voices and human recordings. The next frontier is personalized voices — brands will create distinctive AI voices that become part of their identity, the way a jingle or tagline does.

Custom neural voices require just 30-60 minutes of recorded speech to clone. Ethical frameworks and regulations around voice cloning are emerging, with the EU AI Act requiring clear disclosure when a caller is interacting with an AI — but the quality of the voice itself is no longer a barrier.

Multimodal Conversations

The voice-only versus text-only distinction is dissolving. Multimodal chatbots combine voice, text, and visual elements in a single conversation. A customer starts by speaking, the chatbot responds with voice while simultaneously showing a product image on their phone screen, and the customer taps a button to confirm their selection while continuing to speak.

This is particularly powerful for:

- Technical support: Customer describes the problem by voice; chatbot shows a visual troubleshooting guide

- Shopping: Customer asks for recommendations by voice; chatbot shows product carousels with images and prices

- Healthcare: Patient describes symptoms by voice; chatbot displays appointment availability as a visual calendar

Edge Processing and Offline Voice AI

Current voice chatbots require cloud connectivity for ASR and TTS processing. Edge AI advances are enabling on-device voice processing that works without internet connectivity. This enables voice chatbots in:

- Elevators, parking garages, and basements with no cellular coverage

- Aircraft and cruise ships with limited connectivity

- Rural areas with unreliable internet

- High-security environments where data cannot leave the premises

How to Prepare

Do not wait for the future to arrive — build on today's proven technology and architect for tomorrow:

- Start with text chatbots on WhatsApp and your website to build your conversation design foundation and training data

- Add voice for high-value use cases where the ROI is clear (IVR replacement, outbound calling, accessibility)

- Collect and organize conversation data — every text and voice interaction trains future models

- Choose platforms with voice roadmaps that support multimodal conversations, so you can expand without rebuilding

- Stay compliant — regulatory requirements around AI disclosure and voice data privacy are tightening. Build disclosure and consent into your flows from day one

The businesses that will lead in voice AI are not the ones that wait for perfect technology. They are the ones building conversational AI foundations today and expanding as the technology matures.


About the Author

Conferbot Team specializes in conversational AI, chatbot strategy, and customer engagement automation. With deep expertise in building AI-powered chatbots, they help businesses deliver exceptional customer experiences across every channel.
